It’s no secret that collaboration is the bedrock of business. In fact, a Stanford University study demonstrated that merely priming employees to act in a collaborative fashion — without changing their environment or workflow — makes them more engaged, more persistent, more successful, and less fatigued.

To digitally optimize this biologically ingrained capacity for teamwork, businesses the world over have adopted Software as a Service (SaaS) applications that facilitate the sharing of information between multiple users. Run via centralized, cloud-hosted data centers rather than on local hardware, such applications offer financial and technical benefits to companies of all sizes, from storage savings to reliable connectivity to faster support. Yet it is their collaborative nature that has positioned SaaS at the heart of the modern enterprise.

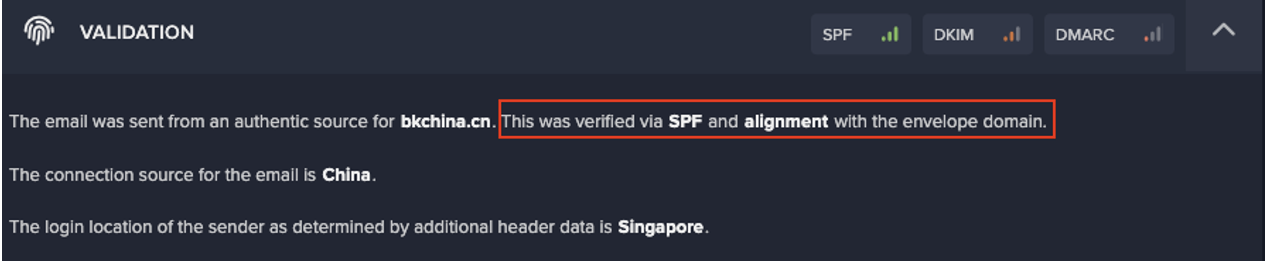

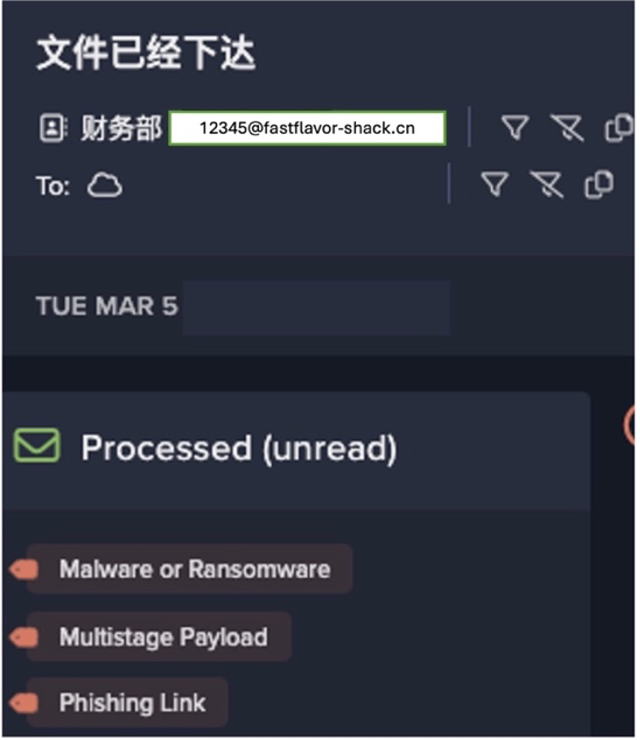

At the same time, the interactivity of cloud services renders them an attractive target for advanced cyber-criminals, who can often leverage a single user’s credentials to compromise dozens of other accounts. And while leading vendors conform to high security standards, the cyber defenses they employ nonetheless have a common weakness: human error on the customer end. By launching sophisticated attacks like those in the case studies below, today’s threat actors are increasingly gaining access to cloud services through the front door, necessitating a fundamentally different security approach that can detect when credentialed users behave — ever so slightly — out of character.

SaaS security issues: Sensitive file access

Among the key challenges is balancing the convenience of open access to information with the imperative of protecting privileged assets. Indeed, with hundreds or even thousands of employees sharing a welter of files and databases at all times, safeguarding SaaS applications against insider threat is extraordinarily difficult with traditional security tools, which use fixed rules and signatures to catch only known, external cyber-attacks. Rather, detecting when credentialed users enter parts of these applications where they don’t belong requires AI security systems that understand their typical online behavior well enough to spot subtle anomalies. And as employees’ responsibilities and privileges inevitably change, such systems must be able to adapt while ‘on the job’.

The necessity of this AI-driven approach to cyber defense recently came to light when Darktrace detected a serious threat on the network of a European bank. After stealing credentials or otherwise gaining access, cyber-criminals will frequently run scripts to identify files containing keywords like “password.” Such was the case with the attackers that Darktrace thwarted, who had managed to find an Office 365 SharePoint file that stored unencrypted passwords. As they had already breached the network, the attackers could reasonably have expected to be in the clear, having bypassed any conventional security controls.

However, while these attackers would likely have exploited the cleartext passwords to escalate their privileges and further infiltrate the organization, Darktrace AI flagged the activity as anomalous for the bank’s particular network because it breached the following model: “Unusual SaaS Sensitive File Access.” Ultimately, the AI’s nuanced and evolving understanding of what constitutes “unusual” behavior for each of the bank’s users and devices proved critical, given that the suspicious file access may well have been benign in other circumstances.
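To make the idea of "unusual for this particular user" concrete, here is a minimal sketch of a per-user baseline for file access. It is not Darktrace's model, whose internals the case study does not describe; the class name `FileAccessBaseline`, the keyword list, and the "never accessed before" rule are all assumptions chosen for illustration.

```python
from collections import defaultdict

# Hypothetical keyword list; a real deployment would tune this.
SENSITIVE_KEYWORDS = ("password", "credential", "secret")


class FileAccessBaseline:
    """Remembers which files each user has accessed before (illustrative only)."""

    def __init__(self):
        # Maps user -> set of file paths that user has already accessed.
        self._seen = defaultdict(set)

    def observe(self, user, path):
        """Record a file access as part of the user's normal behavior."""
        self._seen[user].add(path)

    def is_unusual_sensitive_access(self, user, path):
        """Flag a sensitive-looking file that this user has never opened."""
        is_sensitive = any(k in path.lower() for k in SENSITIVE_KEYWORDS)
        is_new_for_user = path not in self._seen.get(user, set())
        return is_sensitive and is_new_for_user


baseline = FileAccessBaseline()
baseline.observe("alice", "/finance/q1-report.xlsx")
baseline.observe("bob", "/it/admin-passwords.txt")

# Alice has never touched the password file, so her access is flagged,
# while the same access by Bob matches his history and is not.
alice_flagged = baseline.is_unusual_sensitive_access("alice", "/it/admin-passwords.txt")
bob_flagged = baseline.is_unusual_sensitive_access("bob", "/it/admin-passwords.txt")
```

The same file access yields different verdicts for different users, which is the property the case study highlights: whether an event is suspicious depends on who performed it, not only on what was accessed.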

Social engineering attacks

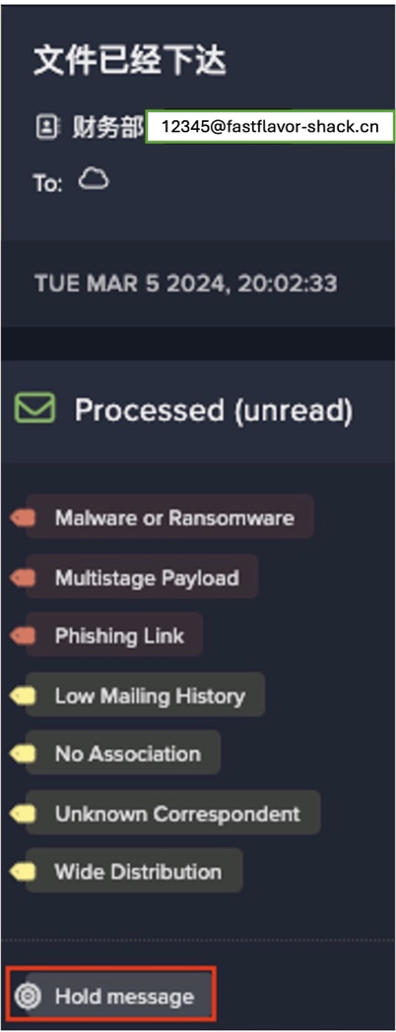

Perhaps the most difficult cloud-based attacks to counter are those that rely on social engineering, since they involve deceiving employees into handing over their credentials and other lucrative information voluntarily. In these cases, AI anomaly detection is the optimal security strategy, as thwarting a social engineering threat before it’s too late means protecting employees from their own mistakes.

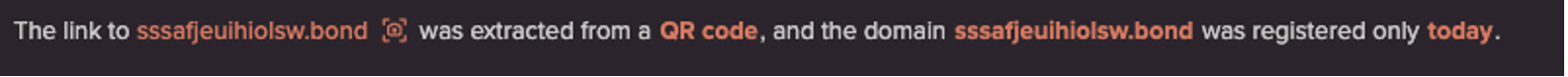

In 2018, Darktrace detected a device on the network of a UK property development company that had attempted to connect to a rare external domain just two seconds after landing on office365.com. The domain had a suspicious name and served a form over unencrypted HTTP, meaning that any sensitive data submitted would be transmitted in plain text and be vulnerable to a man-in-the-middle (MITM) attack. Further investigation indicated that an employee at the property development company had been tricked by a shortened URL in a phishing email into visiting the suspicious domain, which displayed the legitimate-looking Office 365 login page shown below:

Despite the user actively clicking on the URL to visit the page, Darktrace flagged the event as threatening due to the rarity of the destination domain in comparison to the company’s normal network activity. Artificial intelligence has consistently demonstrated this ability to provide a safety net for human error, flagging anomalous connections and rare domains regardless of how well they may be disguised to the unsuspecting user.
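One simple way to picture "rarity of the destination domain" is as the share of the organization's devices that have never contacted it. The sketch below is an assumption-laden illustration, not the scoring Darktrace uses: the `DomainRarity` class, the rarity formula, the 0.95 threshold, and the example domain `login-0ffice365.example` are all hypothetical.

```python
from collections import defaultdict


class DomainRarity:
    """Scores a domain by how few internal devices have contacted it (illustrative)."""

    def __init__(self):
        self._devices_by_domain = defaultdict(set)
        self._all_devices = set()

    def observe(self, device, domain):
        """Record that a device connected to a domain."""
        self._all_devices.add(device)
        self._devices_by_domain[domain.lower()].add(device)

    def rarity(self, domain):
        """Return 1.0 for a never-seen domain, 0.0 for one every device uses."""
        if not self._all_devices:
            return 1.0
        seen_by = len(self._devices_by_domain.get(domain.lower(), ()))
        return 1.0 - seen_by / len(self._all_devices)

    def is_rare(self, domain, threshold=0.95):
        """Assumed cut-off: flag domains contacted by under 5% of devices."""
        return self.rarity(domain) >= threshold


tracker = DomainRarity()
for i in range(100):
    tracker.observe(f"device-{i}", "office365.com")

# One device follows the phishing link to a look-alike domain.
tracker.observe("device-7", "login-0ffice365.example")

common_score = tracker.rarity("office365.com")
phish_score = tracker.rarity("login-0ffice365.example")
phish_flagged = tracker.is_rare("login-0ffice365.example")
```

Because the score depends only on observed connection history, the convincing appearance of the login page has no effect on it, which is why such a check can catch a page that fools the user.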

SaaS security solutions

From social engineering attacks to insider threats to stolen credentials, the risks inherent to SaaS applications are largely user-dependent. As a consequence, any security tool up to the task of defending these applications must understand how their users work, evolve, and collaborate.

Indeed, it is precisely the sought-after interconnectedness and collaborative nature of cloud platforms that makes the potential reward for attackers so great, as a single breach could allow them to compromise an entire company. Yet the efficiencies these platforms promise need not come at the cost of security, since the latest AI cyber defenses shine a light on even the most remote corners of the cloud.